
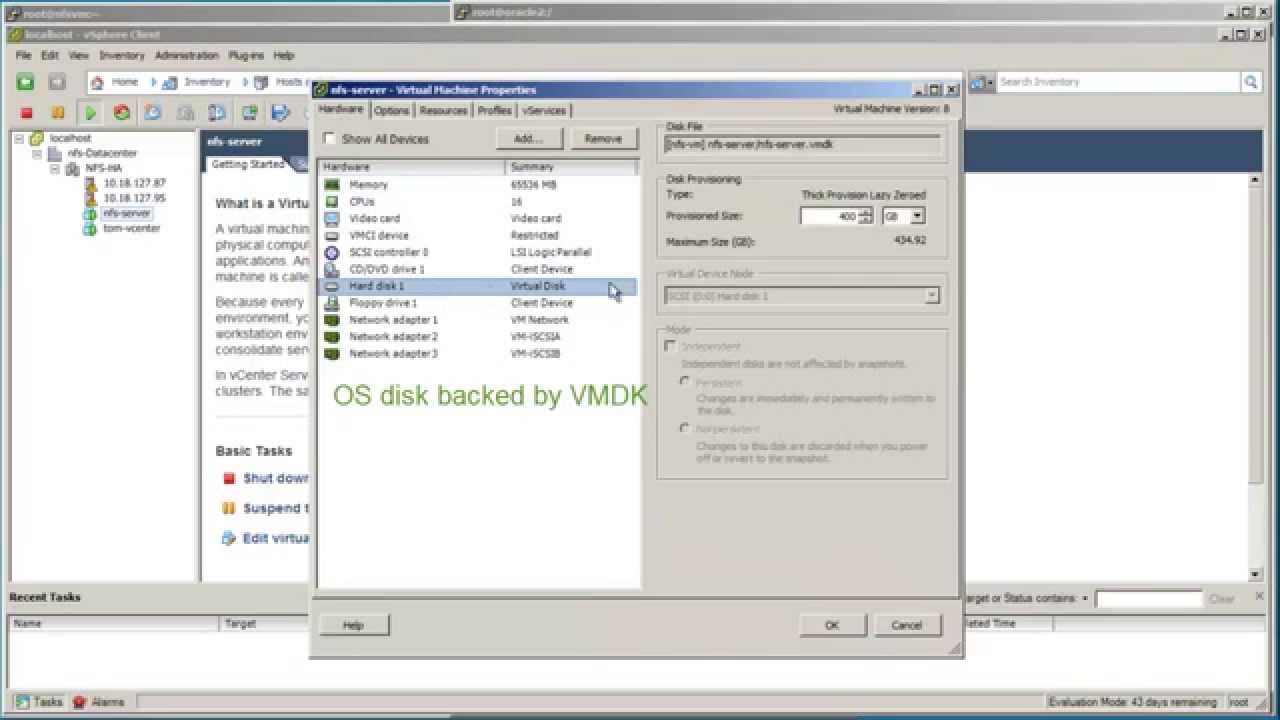

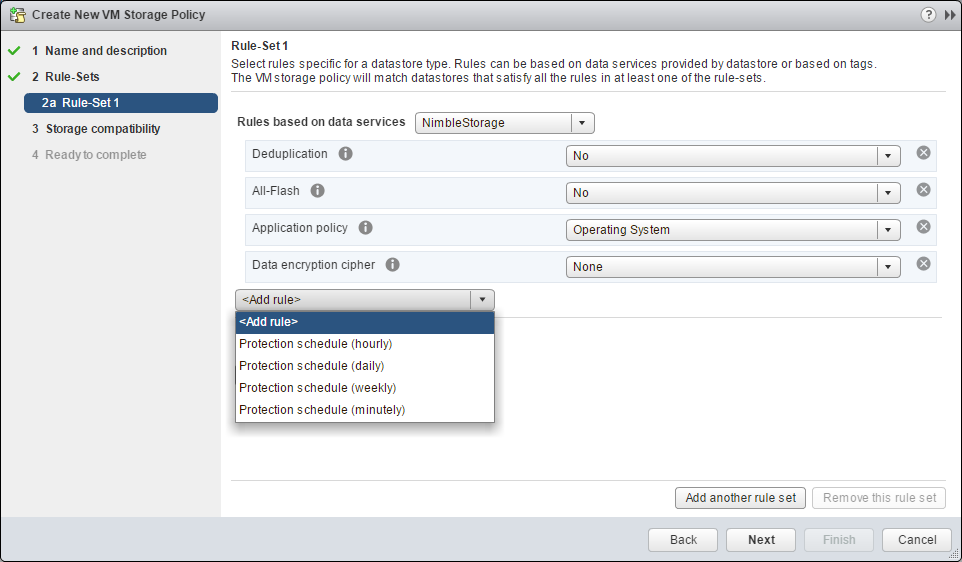

12/7/2023

For vmware nimble san setup

First, go ahead and create a new volume on your array. Go to Manage -> Volumes and click on New Volume. Specify the Volume Name and Description, and select the Performance Policy that gives proper block alignment. Next, select the initiator group which has the information of your ESXi host. If you don't have an initiator group yet, click on New. Name your new initiator group and specify the WWNs of your ESXi hosts. Also, specify a unique LUN ID. This will allow your hosts to connect to the newly created volume.

Next, specify the size and reservation settings for the volume. Specify any protection schedule if required and click on Finish to create the volume.

Now the volume is created on the array and your initiators are set up to allow the FC HBAs on the host to connect. After a rescan of the FC HBA, I can see my LUN with the ID 87. Looking at the path details for LUN 87, you can see 8 paths (2 HBAs x 4 target ports). The PSP should be set to NIMBLE_PSP_DIRECTED. I have 4 Active (I/O) paths and 4 Standby paths. The Active (I/O) paths go to the active controller and the Standby paths go to the standby controller. On the array I can now see all 8 paths under Manage -> Connections.

The volume can now be used as a Raw Device Mapping (RDM) or a Datastore. Those were all the steps required to get your FC array connected to an ESXi host, once the zones on your FC switches are configured.

Some of the images have been provided by Rich Fenton, one of Nimble's Sales Engineers from the UK.
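The path count quoted above (2 HBAs x 4 target ports = 8 paths, 4 Active (I/O) and 4 Standby) can be sanity-checked with a few lines of Python. This is only a sketch of the arithmetic, not something that talks to the host or the array. The HBA and port names are made up, and it assumes every HBA is zoned to every target port and that each controller has two FC ports.

```python
from itertools import product


def enumerate_paths(hbas, target_ports):
    """Return one (hba, port, state) tuple per HBA/target-port pair."""
    paths = []
    for hba, (port, controller_role) in product(hbas, target_ports):
        # Paths to the active controller carry I/O, the rest wait on standby.
        state = "Active (I/O)" if controller_role == "active" else "Standby"
        paths.append((hba, port, state))
    return paths


# Hypothetical names: two host HBAs, two FC ports on each controller.
hbas = ["vmhba2", "vmhba3"]
target_ports = [
    ("A-fc1", "active"),
    ("A-fc2", "active"),
    ("B-fc1", "standby"),
    ("B-fc2", "standby"),
]

paths = enumerate_paths(hbas, target_ports)
active = [p for p in paths if p[2] == "Active (I/O)"]
standby = [p for p in paths if p[2] == "Standby"]

print(len(paths), len(active), len(standby))  # prints: 8 4 4
```

If the path details on the host show fewer paths than this, the zoning on the FC switches or the initiator group is the first place I would look.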

If you don't have an initiator group yet, click on New Initiator Group.

Fairly new to HPE Nimble, so this will be our first software update. I've downloaded it to the Nimble, but have not applied it yet. This array is 100% dedicated to VMware shared storage and 100% FC (not FCoE). The switches were purchased as a bundle with the SAN, and the hosts are HPE ProLiant Gen9. I am especially interested in issues that.

This post is just to share my experience and design with setting up a 4 host VMware vSphere 6.5 Cluster with iSCSI SAN and 10G switching.

Nimble folders are used to group volumes together for a common purpose. A folder can optionally impose a capacity limit for volumes which. In vVols speak, a folder is essentially a storage container. When a vVols managed folder is created, Nimble automatically creates a vVols Storage Container.
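When filling in an initiator group, the WWNs of the ESXi HBAs are easy to mistype. Below is a small Python sketch that normalizes a WWPN to lowercase, colon-separated hex and rejects anything that is not 64 bits long. The function name and the sample WWPN are made up for illustration, and whether your array UI expects exactly this format is an assumption you should check.

```python
import re


def normalize_wwpn(raw):
    """Return a WWPN as lowercase colon-separated hex, or raise ValueError."""
    # Drop common separators (colons, dashes, whitespace) before validating.
    digits = re.sub(r"[:\-\s]", "", raw).lower()
    if digits.startswith("0x"):
        digits = digits[2:]
    # A Fibre Channel WWPN is 64 bits, i.e. exactly 16 hex digits.
    if not re.fullmatch(r"[0-9a-f]{16}", digits):
        raise ValueError(f"not a 64-bit WWPN: {raw!r}")
    return ":".join(digits[i:i + 2] for i in range(0, 16, 2))


# Made-up WWPN, for illustration only.
print(normalize_wwpn("10000090FA123456"))  # prints: 10:00:00:90:fa:12:34:56
```

Running each WWPN through a check like this before pasting it into the initiator group catches a missing or extra digit early, which otherwise only shows up as a LUN that never appears after the rescan.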

This post will cover the integration of a Nimble Storage Fibre Channel array in a VMware ESXi environment. The steps are fairly similar to integrating a Nimble iSCSI array, but there are some more FC-specific settings which need to be set.

Nimble OS 3 introduces the concept of folders.

The HPE Nimble Storage vCenter plugin offers vSphere administrators a single familiar user interface for easily managing their HPE Nimble Storage environment and their virtual compute environment. The plugin is compatible with both the vSphere web client and the vCenter thick client.

but we don't use it.

The VMware software iSCSI initiator is the preferred means for connecting to HPE Nimble Storage arrays by using the iSCSI protocol. You can use two connection methods to achieve HA and load distribution:

Method 1: One vmnic per vSwitch, and one VMkernel port per vSwitch.
Method 2: Two or more vmnics per vSwitch, and one dedicated VMkernel port.

It depends on the customer whether I suggest letting a Windows server access the ESXi datastores directly on the SAN. The LUNs are "visible" and "ready to initialize" on the Windows backup server. Yes, we set "automount disable", but that only prevents the popup in the Windows storage manager. If your "admin" clicks initialize, your datastores start dying.

Yes, I have customers with Veeam Enterprise Plus with NetApp NFS or other supported "Snapshot from SAN" systems, and this gives a huge benefit. When the backup server is CPU limited, we use a Veeam proxy (VM) on every host (in larger environments) and use "Hot Add" rather than Backup from SAN. We do the same when Veeam itself runs in a VM, because Backup from SAN is only supported with a physical backup server.

The vSphere Hardening Guide points out that bidirectional CHAP should be used.

We have used mutual CHAP for a long time in our EqualLogic setups. Every ESXi cluster has its own "password", so whenever we add a new host to a cluster it gets access to all LUNs the cluster needs. The same goes when creating a new volume on the storage and presenting the LUNs to the hosts: we can be sure that all hosts get access. We don't use ACLs based on IQN (only in the early days) or on IP.
